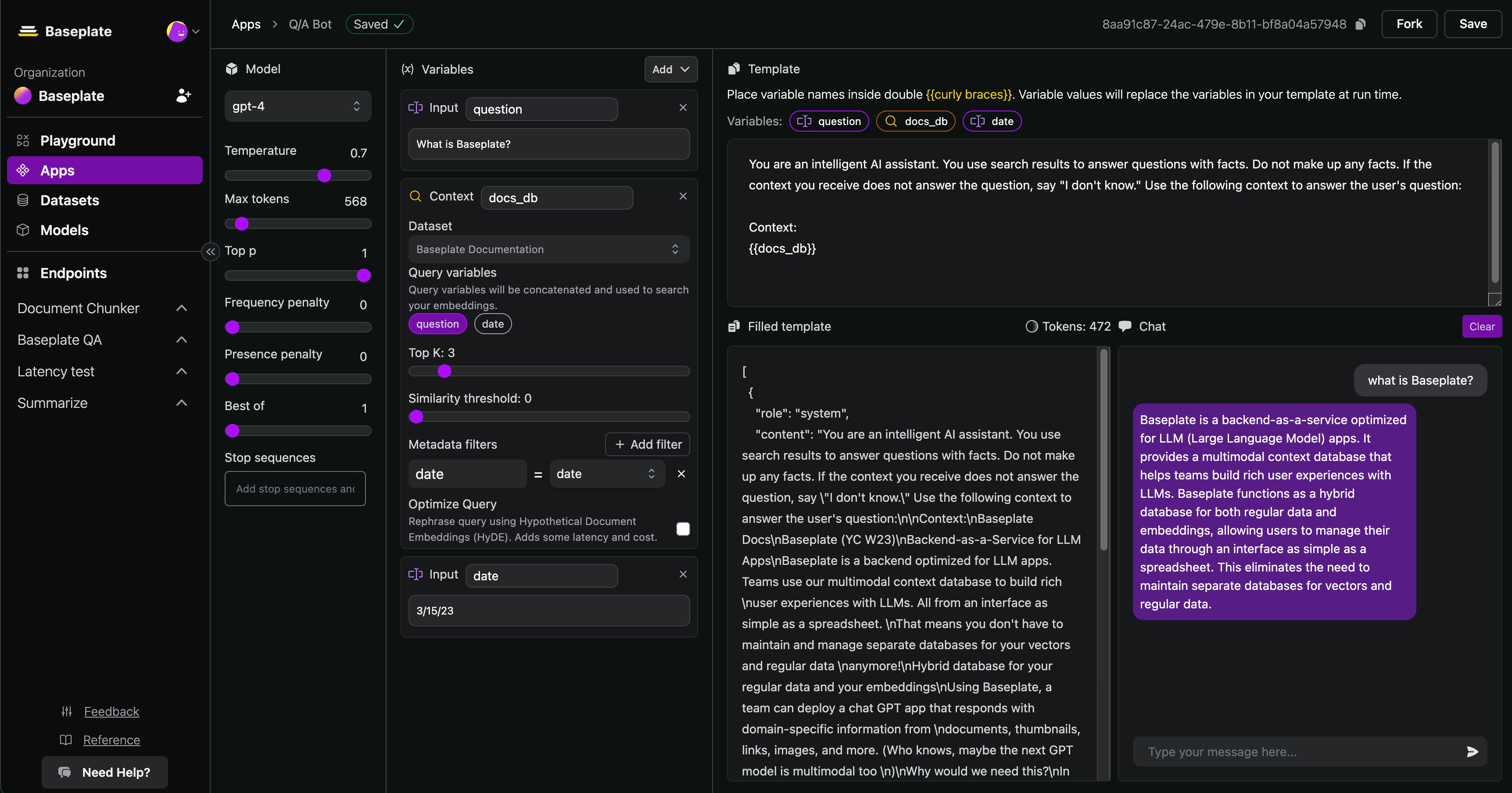

Navigate to the Apps tab to build reusable prompt templates that you can use across multiple projects. Your LLM Apps might need to connect to an external data source, and Apps integrate closely with datasets so you can easily inject context into your prompts. Apps take variables (e.g. a customer’s name or question) and use them to format a prompt. This gives you a flexible way to wrap unstructured user inputs in prompts that you’ve designed. Apps also use variables to find the right content in your Baseplate Database.

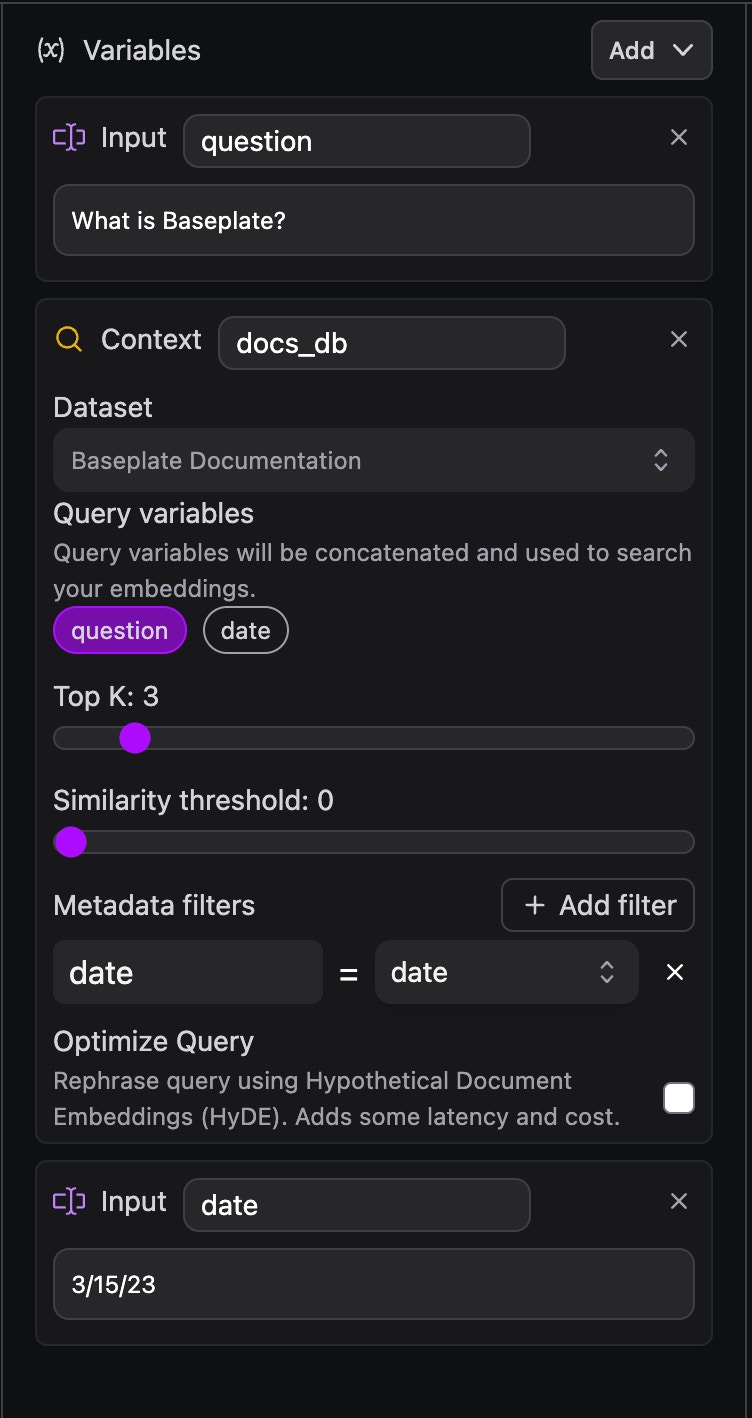

- Add variables using the + button or by pressing Enter. If possible, give each variable a simple, one-word name.

- Add your variables to your prompt template by writing the name of each form field inside double curly brackets, e.g. `{{question}}`. This tells Baseplate where to insert the user-provided values.
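The double-curly-bracket substitution can be illustrated with a short Python sketch. It is not Baseplate's implementation: `render_prompt` is a hypothetical helper, and it assumes that a placeholder is a single word inside `{{...}}` (optionally padded with spaces) and that a missing variable should be treated as an error.

```python
import re

# Matches {{name}} or {{ name }}; tolerating inner spaces is an assumption
# for this sketch, not confirmed Baseplate behavior.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template: str, variables: dict[str, str]) -> str:
    """Replace each {{name}} in the template with the matching variable value.

    Raises KeyError if the template references a variable that was not supplied.
    """

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing value for variable '{name}'")
        # Returning the value from a function means backslashes in user
        # input are inserted literally rather than parsed as escapes.
        return variables[name]

    return PLACEHOLDER.sub(substitute, template)


template = "You are a support agent. Greet {{name}} and answer: {{question}}"
print(render_prompt(template, {"name": "Ada", "question": "How do I reset my password?"}))
```

Failing loudly on a missing variable, rather than leaving the raw `{{...}}` in the prompt, is a design choice for this sketch; a real App form would normally require each field before running.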

Add Search Functionality to Reference a Database

To reference your content, Apps search through a database. When you click “Configure Embedding Search”, you’ll see your search options, where you can configure search to meet your specific use case.

- Choose a database

- Choose your search variables. Your search variables will be embedded using the model configured for your dataset.

- Choose the number of rows to return

- Choose a minimum similarity. This filters out low-confidence results, which helps reduce hallucination.

- Add metadata filters. Filters let you restrict results to rows that match custom properties in your data.

- (Team Plan) If you use fine-tuned hybrid search, the alpha parameter gives you additional control to improve results.
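The search options above can be modeled with a small, self-contained Python sketch. It assumes cosine similarity, exact-match metadata filters, and a query vector that has already been produced by embedding the search variables; `Row`, `search`, and `hybrid_score` are hypothetical names, and Baseplate's actual scoring may differ. The alpha weighting shown is one common hybrid-search convention (alpha = 1 for pure vector search, alpha = 0 for pure keyword search), not a documented Baseplate formula.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Row:
    text: str
    embedding: list[float]
    metadata: dict = field(default_factory=dict)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(rows, query_embedding, top_k=3, min_similarity=0.0, filters=None):
    """Return up to top_k (score, row) pairs, best first.

    Rows whose metadata does not match every filter key/value are skipped,
    and rows scoring below min_similarity are dropped.
    """
    filters = filters or {}
    scored = []
    for row in rows:
        if any(row.metadata.get(k) != v for k, v in filters.items()):
            continue  # metadata filter: exact match on each key
        score = cosine(query_embedding, row.embedding)
        if score >= min_similarity:
            scored.append((score, row))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


def hybrid_score(dense: float, keyword: float, alpha: float) -> float:
    """Assumed convention: blend a vector score and a keyword score by alpha."""
    return alpha * dense + (1 - alpha) * keyword


rows = [
    Row("Reset your password from Settings.", [0.9, 0.1], {"product": "web"}),
    Row("Export data as CSV.", [0.1, 0.9], {"product": "web"}),
    Row("Reset your PIN in the mobile app.", [0.8, 0.3], {"product": "mobile"}),
]
# In a real App the query vector comes from embedding the search variables
# with the dataset's model; here it is a hand-written toy vector.
results = search(rows, [1.0, 0.0], top_k=2, min_similarity=0.5, filters={"product": "web"})
for score, row in results:
    print(f"{score:.2f}  {row.text}")
```

In this toy run the CSV row falls below the 0.5 minimum similarity and the mobile row is excluded by the metadata filter, so only one row comes back even though `top_k` is 2.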

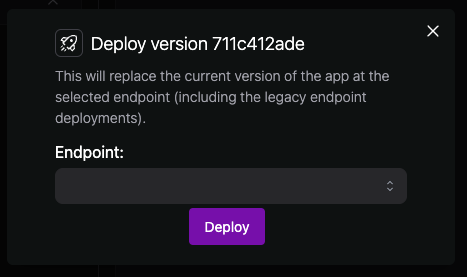

Deploy Your App

When you’re satisfied, commit your app. Committing creates a read-only version that is safe to deploy, which helps prevent accidental changes in production.

- Click Commit at the top right of your App

- Once your app is committed, you should see a “Deploy” button

- Deploy the App to your endpoint. The endpoint page contains API instructions, logs, and deployment history.
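The commit-then-deploy flow can be pictured as immutable snapshots plus an endpoint that points at one of them. This is a conceptual Python sketch only: `App`, `CommittedVersion`, and `Endpoint` are hypothetical classes, not part of any Baseplate SDK, and the real platform may store versions differently.

```python
from dataclasses import dataclass, FrozenInstanceError
from types import MappingProxyType
from typing import Optional


@dataclass(frozen=True)
class CommittedVersion:
    """Read-only snapshot of an App's prompt template and settings."""
    number: int
    template: str
    settings: MappingProxyType  # read-only view of a private copy


class App:
    def __init__(self, template: str, settings: dict):
        self.draft_template = template  # editable working copy
        self.draft_settings = dict(settings)
        self.versions: list[CommittedVersion] = []

    def commit(self) -> CommittedVersion:
        # Copy the draft so later edits cannot change this version.
        version = CommittedVersion(
            number=len(self.versions) + 1,
            template=self.draft_template,
            settings=MappingProxyType(dict(self.draft_settings)),
        )
        self.versions.append(version)
        return version


class Endpoint:
    def __init__(self):
        self.live: Optional[CommittedVersion] = None
        self.history: list[int] = []  # deployment history as version numbers

    def deploy(self, version: CommittedVersion) -> None:
        self.live = version
        self.history.append(version.number)


app = App("Answer {{question}}", {"top_k": 3})
v1 = app.commit()
app.draft_settings["top_k"] = 10  # keep editing the draft after committing
endpoint = Endpoint()
endpoint.deploy(v1)
print(endpoint.live.settings["top_k"])  # 3: the deployed version is unaffected
try:
    v1.template = "edited"
except FrozenInstanceError:
    print("v1 is read-only")
```

Copying the draft settings at commit time is what makes each snapshot independent of later edits, which mirrors why the docs describe a committed version as safe to deploy.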